Computer Vision · Beginner

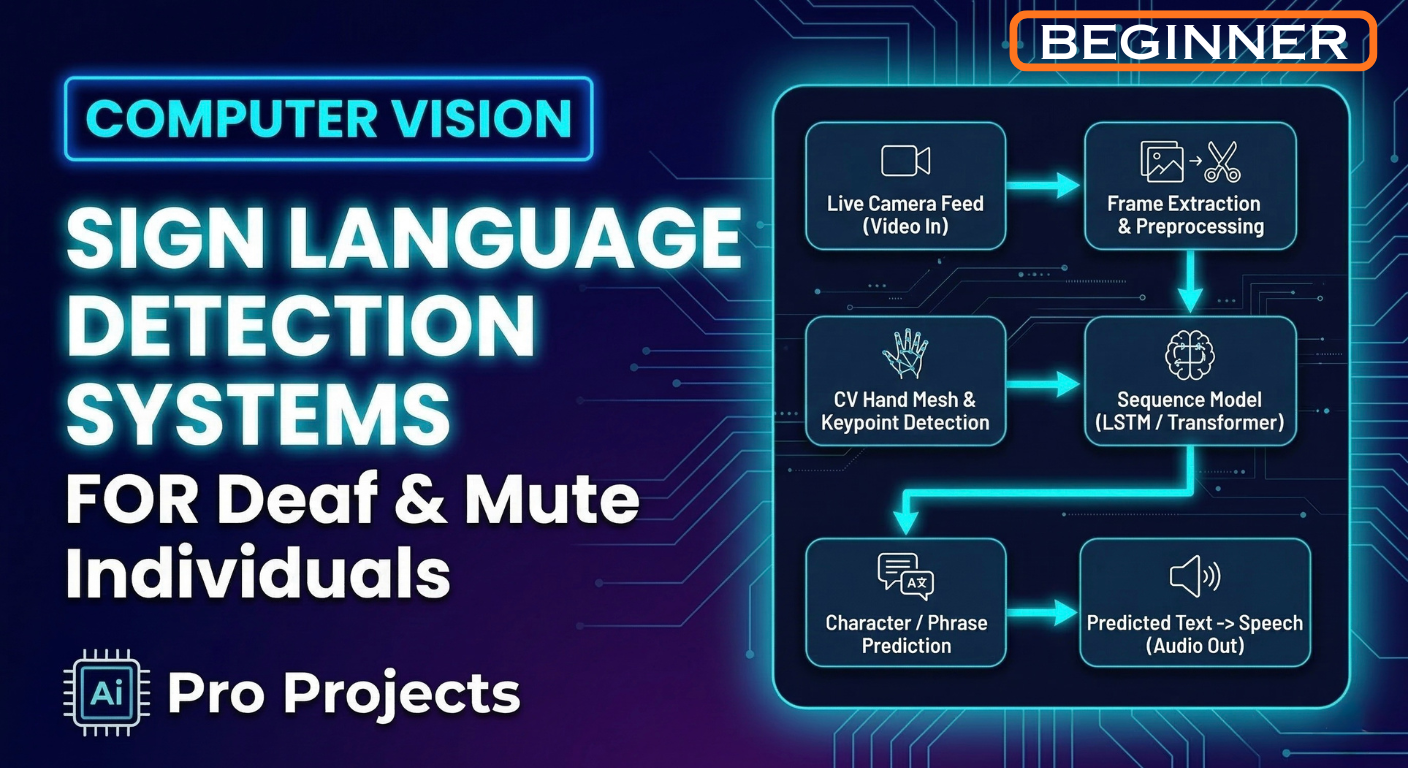

Sign Language Detection System for Deaf and Mute Individuals

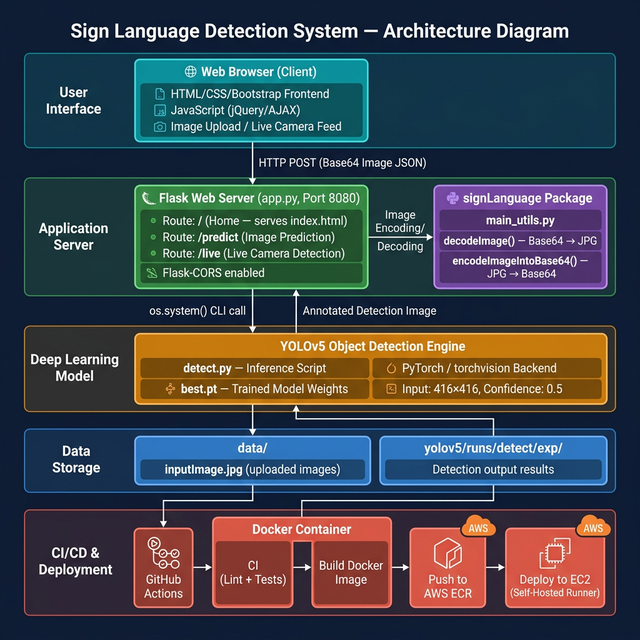

The Sign Language Detection System is an end-to-end deep learning application designed to bridge the communication gap between deaf and mute individuals and the hearing community. It uses YOLOv5 (You Only Look Once v5), a widely used real-time object detection model, to recognize and classify hand gestures representing sign language symbols in still images or live camera feeds. The application is served by a Flask web server and deployed to AWS through a fully automated CI/CD pipeline built with GitHub Actions and Docker.
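An object detector like YOLOv5 produces many overlapping candidate boxes for each hand in a frame. A post-processing step called non-maximum suppression (NMS) then keeps only the most confident box for each detected sign. The code below is a minimal, pure-Python illustration of that step under stated assumptions. It is not the project's code. The `Detection` class, the `SIGN_LABELS` list, and the threshold values are hypothetical, because this page does not list the project's classes or settings.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical label set: the project's actual sign classes are not listed here.
SIGN_LABELS = ["Hello", "Yes", "No", "Thank you", "I love you"]


@dataclass
class Detection:
    """One candidate box in pixel coordinates (hypothetical structure)."""
    x1: float
    y1: float
    x2: float
    y2: float
    score: float     # model confidence in [0, 1]
    class_id: int    # index into SIGN_LABELS


def iou(a: Detection, b: Detection) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a.x1, b.x1), max(a.y1, b.y1)
    ix2, iy2 = min(a.x2, b.x2), min(a.y2, b.y2)
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a.x2 - a.x1) * (a.y2 - a.y1)
    area_b = (b.x2 - b.x1) * (b.y2 - b.y1)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(dets: List[Detection],
                        conf_thres: float = 0.25,
                        iou_thres: float = 0.45) -> List[Detection]:
    """Class-aware greedy NMS with illustrative thresholds.

    Drops low-confidence boxes, then keeps each box only if it does not
    overlap an already-kept box of the same class by iou_thres or more.
    """
    candidates = sorted((d for d in dets if d.score >= conf_thres),
                        key=lambda d: d.score, reverse=True)
    kept: List[Detection] = []
    for d in candidates:
        if all(k.class_id != d.class_id or iou(k, d) < iou_thres for k in kept):
            kept.append(d)
    return kept


def to_labels(dets: List[Detection]) -> List[Tuple[str, float]]:
    """Map surviving boxes to (sign label, rounded confidence) pairs."""
    return [(SIGN_LABELS[d.class_id], round(d.score, 2)) for d in dets]


if __name__ == "__main__":
    raw = [
        Detection(10, 10, 110, 110, 0.92, 0),   # "Hello", strongest box
        Detection(12, 8, 108, 112, 0.80, 0),    # near-duplicate of the first
        Detection(200, 50, 300, 150, 0.70, 2),  # separate "No" gesture
        Detection(0, 0, 5, 5, 0.10, 1),         # below confidence threshold
    ]
    print(to_labels(non_max_suppression(raw)))
```

In this example the near-duplicate "Hello" box overlaps the stronger one with an IoU of about 0.92, so NMS suppresses it. The low-confidence box is filtered out before suppression, which leaves one "Hello" and one "No" detection.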

10 lectures

Project Instructor

Boktiar Ahmed Bappy

5+ years of experience

Premium

One Subscription. 40+ Projects. Unlimited Access.

Access: Mobile & Web